Proustian Camera – Prototyping

Sam Hill

8th January 2013

We’ve been looking at memory – specifically episodic memory – and its relationship with experience for a few months now. We’ve spoken with neuroscientists on the science of memory, and Ben has been working on several interventions around the subject. Earlier in the year I first proposed our so-called “Anti-Camera”, or “Scent-camera”. More recently we have come to call it The Proustian Camera (which seems to summarise our intentions most neatly). Ultimately, we hope to develop a device that provides an alternative to conventional cameras, by letting people ‘tag’ events and occasions with a scent, which they can later recreate to aid memory.

The Prototype

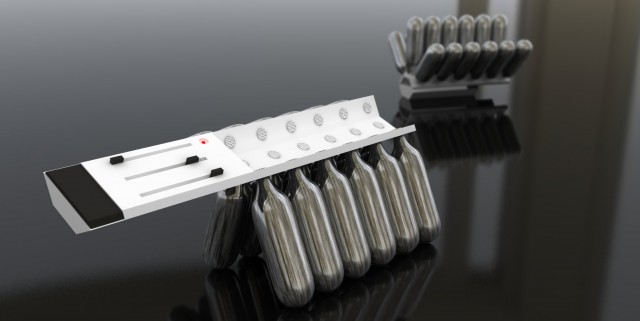

We’re pleased to announce that this week we’ve assembled our first working prototype – a rudimentary, bare-bones version of what we’d like to finally end up building.

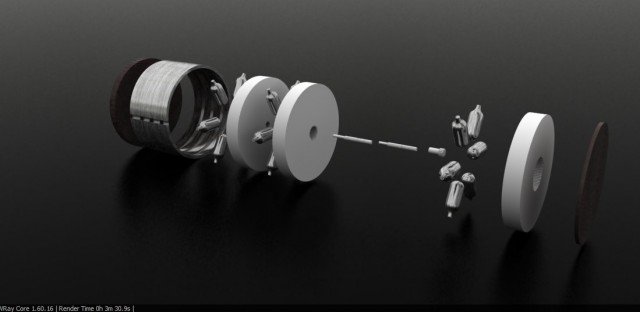

The prototype has 6 scent chambers (the final version will likely have between 18 and 30), and uses piezo-atomisers paired with felt wicks to make the compound scents airborne. Ben gutted the components and electronics from a bunch of Glade “Wisps” (there’s a Make: tutorial on how these can be hacked) and set up a couple of Arduinos inside to sync the atomising with the push of a trigger. The left-most button is a “submit” button, and the rest toggle their respective piezos (‘up’ is on, though you can see that at the time of the photo they weren’t aligned).
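As a rough illustration of the toggle-and-submit behaviour described above, here is a small Python model. It is not the Arduino firmware running in the prototype: the class and function names are hypothetical, and it assumes that pressing submit fires every selected piezo together.

```python
from dataclasses import dataclass, field

NUM_CHAMBERS = 6  # the prototype has 6 scent chambers


@dataclass
class ScentPanel:
    """Toy model of the toggle switches plus submit button (hypothetical names)."""

    toggles: list = field(default_factory=lambda: [False] * NUM_CHAMBERS)

    def flip(self, chamber: int) -> None:
        # Flip one toggle; 'up' (True) means that chamber's piezo is selected.
        self.toggles[chamber] = not self.toggles[chamber]

    def submit(self) -> list:
        # Assumption: submit fires all selected piezos at once.
        # Returns the indices of the chambers that would be atomised.
        return [i for i, on in enumerate(self.toggles) if on]


def distinct_blends(chambers: int) -> int:
    # Each chamber is either on or off, giving 2**n switch settings;
    # subtract the all-off setting, which emits nothing.
    return 2 ** chambers - 1
```

Under this on/off model, 6 chambers allow 63 distinct blends, and 18 chambers would allow 262,143. That ignores any variation in how long or how strongly each piezo fires.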

Getting to a Prototype stage

To briefly summarise how we got to where we are now – firstly, we’re very grateful to Odette Toilette, who has valuable experience having worked on some interesting fragrance-based products before, and knows all about the alchemical world of olfaction. She pointed us in the right direction for sourcing the scents we needed, and helped us get our heads around the mechanics of how to make smell-objects work.

We bought thirty or so ingredient scents from Perfumer’s Apprentice, who were very helpful when we explained what we were trying to do. They sent us a bunch of tiny jars with a broad spectrum of concentrated scents in them, ranging from musky base tones to fruity high notes. Stuff like Ethyl Methyl 2-Butyrate (which sort of smells like a banana-flavoured epoxy), Bergamot (citric) and Civetone. Civetone is one of the oldest known ingredients in perfume, and is still present in contemporary fragrances like Chanel No. 5. However, in isolation it smells like a prison gymnasium crossed with a festival toilet.

Experiments

We used these scents for a couple of research projects, to see if memory scent tagging would work as neatly as we hoped.

First, a “sample group” of colleagues visited two locations: one as a control, and one whilst using a composite scent. Several days later we re-combined the composite scent and tested to see if they could recall the latter location with more clarity than the control.

In the second experiment we took a larger group (some 50 or so Goldsmiths design volunteers) and showed them five YouTube music videos, in each case giving them a different composite scent. We wanted to see if they could later recall which scent was linked to which video.

Disappointingly, in both cases we learned that episodic memory is not as discrete as we first hoped. Declaring one “episode” of memory over, and another to have begun, is not as straightforward as we had expected – similar activities, especially if minutes apart, will ultimately “bleed” into one another from the perspective of a participant.

It seems the time frame of a Proustian mark will be associated with a period longer than several minutes. Though both experiments showed a slight trend towards a positive correlation between olfactory stimulation and memory, we’ll need to repeat them, spaced over a longer period of time, to glean any usable data. Now at least we can use the prototype in these experiments.

Refining the model

With a working prototype out in the field, we’ve set our sights on designs for a fully-featured iteration. Specifically, we’re working out a form and use-cases to get the right scents in the right place, and with a solid and intuitive user-interface.

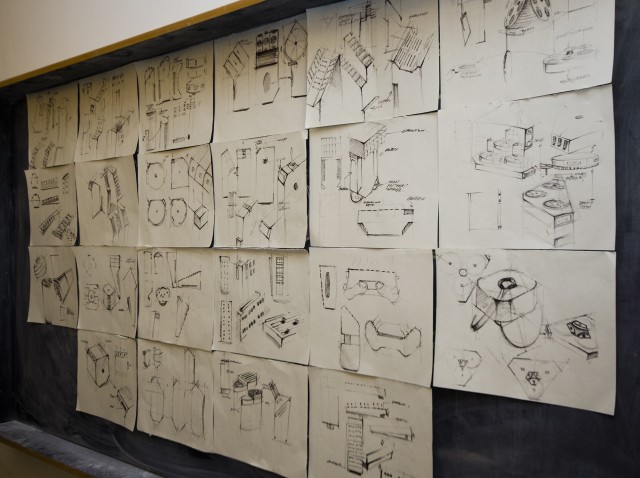

Justas has been rapidly sketching forms to interrogate the object’s potential use. We’re conscious that as a conversation piece, our object may benefit from referencing consumer technology products. We’ve set about exploring forms that indicate both its situations of use and its scent-based nature. We want people to be able to imagine it in their hands and functioning in their lives. Drawing and re-drawing the object, we’re learning what it needs in order to function and how it can read as a camera-like object.

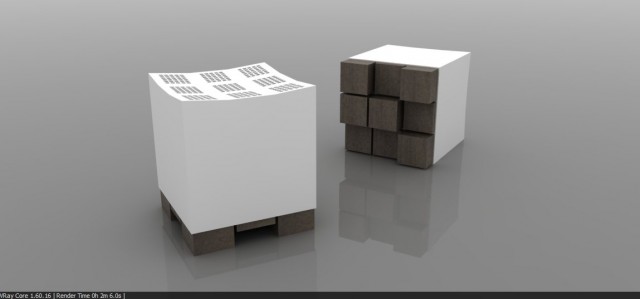

Justas has also explored the form as 3-D render-sketches, again to see it as a finished object and have conversations about how it works and where it sits. We’re now starting to produce physical sketches, which we’ll upload pics of soon.

Next Steps

We still need solid data, demonstrating whether or not our object can do what the scientific theory implies. We’ll use our prototype to explore this. Our use-case explorations have helped us identify three routes, which we’ll be weighing up over the following weeks.

The outcome of this exploration could be:

- A conceptually ‘purist’ approach – a ‘blind’ object with minimal functionality, capable of only two functions: a) emitting a scent for the first time, b) emitting a previous scent-encoding at random

- An assisting device for cameras – something that works in tandem with conventional cameras to provide a more holistic memory encoding experience

- A compromise between the two – a legitimate ‘competitor’ to a recollection-through-sight object, but a tool that is sympathetic to the user and provides meta-data (location, time, possibly the ability to tag scents textually) to help them select previous scents.
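To make the first, ‘purist’ route concrete, here is a minimal Python sketch of a device with only those two functions. Everything in it is an assumption for illustration: the class name, the idea that the device picks each new blend itself, and the encoding of a blend as a bitmask (bit i set means chamber i fires).

```python
import random


class PuristScentCamera:
    """Hypothetical model of the 'blind' device: no screen, no metadata."""

    def __init__(self, chambers=6, rng=None):
        self.chambers = chambers
        self.rng = rng or random.Random()
        self.history = []  # blends emitted so far, oldest first

    def capture(self):
        # Function (a): emit a blend that has not been emitted before.
        total = 2 ** self.chambers - 1  # every on/off pattern except all-off
        if len(self.history) >= total:
            raise RuntimeError("every possible blend has already been used")
        while True:
            blend = self.rng.randint(1, total)  # inclusive on both ends
            if blend not in self.history:
                break
        self.history.append(blend)
        return blend

    def recall(self):
        # Function (b): re-emit one earlier blend, picked at random.
        if not self.history:
            raise RuntimeError("nothing has been captured yet")
        return self.rng.choice(self.history)
```

The third route would sit on top of something like this, storing a time, location or text tag alongside each entry in `history` so that the user can choose which scent to replay rather than leaving it to chance.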

Once we’ve settled on the use conditions, we’ll get 3D prints made up and install component PCBs more specifically adapted to our final needs (as opposed to the proto-electronics we’ve been using so far).

(Finally, here’s a close-up of a scent getting atomised:)